Research Paper: *One* Good Game in 400: LLMs Can Describe Chess Rules But Just Can't Follow Them

Stuart Armstrong, Jocelyn Kelley, Rebecca Groman

Large language models (LLMs) are frequently credited with emergent reasoning and generalization capabilities. We present a simple empirical test of these claims: 400 games of chess played by Claude (Anthropic) and GPT-5 (OpenAI) in standard algebraic notation. The models played both against themselves and against each other. Each game terminated on checkmate, stalemate, or the first attempted impossible or illegal move; any data after that point was discarded.
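The termination rule described above can be sketched as a small game loop. This is a minimal illustration, not the harness used in the paper: the names `Outcome`, `GameRecord`, `play_game`, and `ScriptedRules` are hypothetical, the `max_plies` safety cap is an assumption not mentioned in the source, and the rules engine is a scripted stand-in so that the example stays self-contained. A real run would replace it with a trusted move validator (for example, one built on the python-chess library) and replace the scripted players with model calls.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable, List, Optional, Sequence, Set


class Outcome(Enum):
    CHECKMATE = "checkmate"
    STALEMATE = "stalemate"
    ILLEGAL_MOVE = "illegal_move"


@dataclass
class GameRecord:
    moves: List[str] = field(default_factory=list)  # legal moves played so far
    outcome: Optional[Outcome] = None               # None if the ply cap was hit
    offending_move: Optional[str] = None            # set only on ILLEGAL_MOVE


class ScriptedRules:
    """Toy stand-in for a rules engine: the legal moves are scripted per ply."""

    def __init__(self, legal_by_ply: Sequence[Set[str]], check_at_end: bool = False):
        self._legal_by_ply = legal_by_ply
        self._check_at_end = check_at_end
        self._ply = 0

    def legal_moves(self) -> Set[str]:
        if self._ply < len(self._legal_by_ply):
            return self._legal_by_ply[self._ply]
        return set()  # no legal moves left: the game is over

    def push(self, move: str) -> None:
        self._ply += 1

    def in_check(self) -> bool:
        return self._check_at_end


Player = Callable[[List[str]], str]  # maps the move history to the next move


def play_game(rules, players: Sequence[Player], max_plies: int = 1000) -> GameRecord:
    """Alternate between two players until checkmate, stalemate, or the first
    illegal move. Nothing is recorded after the terminating event."""
    record = GameRecord()
    for ply in range(max_plies):  # assumed cap, to guarantee termination
        legal = rules.legal_moves()
        if not legal:
            record.outcome = Outcome.CHECKMATE if rules.in_check() else Outcome.STALEMATE
            return record
        move = players[ply % 2](list(record.moves))
        if move not in legal:
            record.outcome = Outcome.ILLEGAL_MOVE
            record.offending_move = move
            return record
        rules.push(move)
        record.moves.append(move)
    return record


def scripted_player(script: Sequence[str]) -> Player:
    """Hypothetical stand-in for a model: replays a fixed list of moves."""
    it = iter(script)
    return lambda history: next(it)


# White's second move is not in the scripted legal set, so the game stops there.
rules = ScriptedRules([{"e4", "d4"}, {"e5", "c5"}, {"Nf3", "Nc3"}])
record = play_game(rules, (scripted_player(["e4", "Ke3"]), scripted_player(["e5"])))
print(record.outcome, record.moves, record.offending_move)
```

Keeping the legality check outside the players means the verdict on each move never depends on the model's own account of the board, which is the property the experiment relies on.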

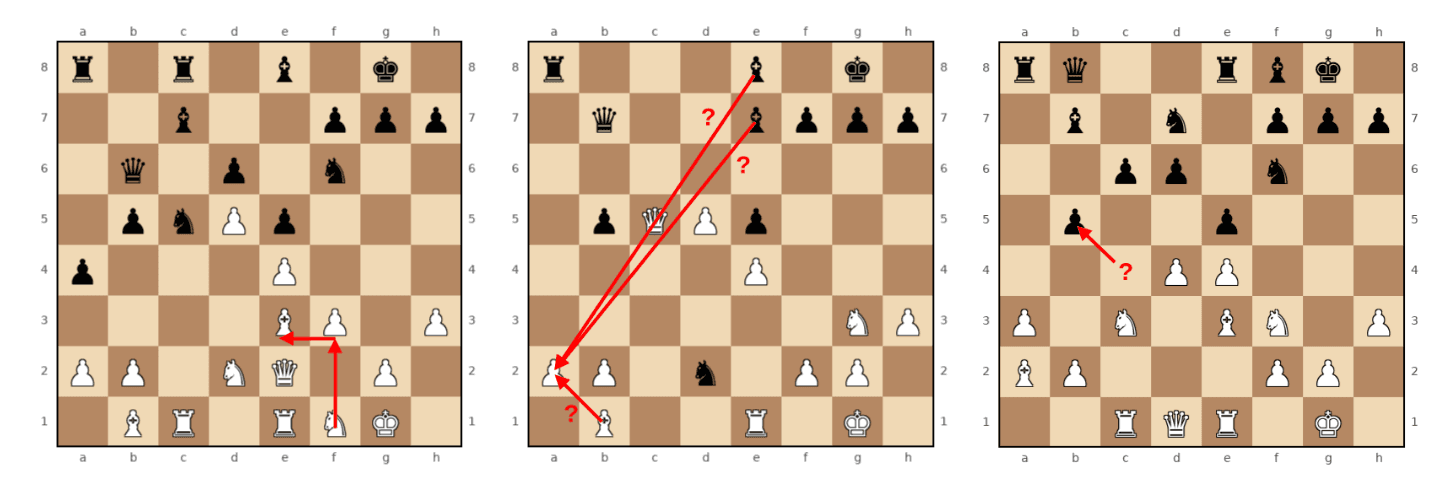

Despite the abundance of chess games and commentary in typical pretraining corpora, and despite the decades-long history of superhuman computer chess, only one of 400 games reached a checkmate or stalemate. The other 399 games ended with one of the models attempting an impossible or illegal move.

These findings provide a clean, reproducible diagnostic that highlights fundamental limitations in LLM state tracking and the gap between sequence-level pattern matching and genuine game-theoretic reasoning. They offer further evidence that language models lack the intrinsic ability to generate and employ world models. The models can describe the rules of chess but cannot follow them, which is evidence that LLMs do not understand chess concepts in the same way that humans do.

Link to Paper.

Link to GitHub Repo.